Cloud computing has two meanings. The most common refers to running workloads remotely over the internet in a commercial provider’s data center, also known as the “public cloud” model. Popular public cloud offerings—such as Amazon Web Services (AWS), Salesforce’s CRM system, and Microsoft Azure—all exemplify this familiar notion of cloud computing. Today, most businesses take a multicloud approach, which simply means they use more than one public cloud service.

The second meaning of cloud computing describes how it works: a virtualized pool of resources, from raw compute power to application functionality, available on demand. When customers procure cloud services, the provider fulfills those requests using advanced automation rather than manual provisioning. The key advantage is agility: the ability to apply abstracted compute, storage, and network resources to workloads as needed and tap into an abundance of prebuilt services.

The public cloud lets customers gain new capabilities without investing in new hardware or software. Instead, they pay their cloud provider a subscription fee or pay for only the resources they use. Simply by filling in web forms, users can set up accounts and spin up virtual machines or provision new applications. More users or computing resources can be added on the fly—the latter in real time as workloads demand those resources thanks to a feature known as autoscaling.
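To make the autoscaling idea concrete, here is a minimal Python sketch of a proportional scaling rule. It is an illustration under stated assumptions, not any provider's actual algorithm: the function name `desired_instances`, the load percentages, and the instance limits are all hypothetical.

```python
import math


def desired_instances(current: int, observed_load: float, target_load: float,
                      min_instances: int = 1, max_instances: int = 10) -> int:
    """Return an instance count that moves per-instance load toward the target.

    All parameters are illustrative assumptions: loads are average CPU
    percentages per instance, and the limits stand in for account quotas.
    """
    if current < 1 or target_load <= 0:
        raise ValueError("current must be >= 1 and target_load must be > 0")
    # Scale the fleet in proportion to how far the load is from the target.
    raw = math.ceil(current * observed_load / target_load)
    # Never go below the floor or above the ceiling the customer configured.
    return max(min_instances, min(max_instances, raw))


# Four instances running at 90% CPU against a 60% target: scale out.
print(desired_instances(4, 90, 60))  # prints 6
```

A real autoscaler would also smooth the metric over time and wait between scaling actions so the fleet does not oscillate; the sketch leaves both out.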

Cloud computing definitions for each type

The array of available cloud computing services is vast, but most fall into one of the following categories.

SaaS (software as a service)

This type of public cloud computing delivers applications over the internet through the browser. The most popular SaaS applications for business can be found in Google’s G Suite and Microsoft’s Office 365; among enterprise applications, Salesforce leads the pack. But virtually all enterprise applications, including ERP suites from Oracle and SAP, have adopted the SaaS model. Typically, SaaS applications offer extensive configuration options as well as development environments that enable customers to code their own modifications and additions.

IaaS (infrastructure as a service) definition

At a basic level, IaaS public cloud providers offer storage and compute services on a pay-per-use basis. But the full array of services offered by all major public cloud providers is staggering: highly scalable databases, virtual private networks, big data analytics, developer tools, machine learning, application monitoring, and so on. Amazon Web Services was the first IaaS provider and remains the leader, followed by Microsoft Azure, Google Cloud Platform, and IBM Cloud.

PaaS (platform as a service) definition

PaaS provides sets of services and workflows that specifically target developers, who can use shared tools, processes, and APIs to accelerate the development, testing, and deployment of applications. Salesforce’s Heroku and Force.com are popular public cloud PaaS offerings; Pivotal’s Cloud Foundry and Red Hat’s OpenShift can be deployed on premises or accessed through the major public clouds. For enterprises, PaaS can ensure that developers have ready access to resources, follow certain processes, and use only a specific array of services, while operators maintain the underlying infrastructure.

FaaS (functions as a service) definition

FaaS, the cloud version of serverless computing, adds another layer of abstraction to PaaS, so that developers are completely insulated from everything in the stack below their code. Instead of futzing with virtual servers, containers, and application runtimes, they upload narrowly functional blocks of code, and set them to be triggered by a certain event (such as a form submission or uploaded file). All the major clouds offer FaaS on top of IaaS: AWS Lambda, Azure Functions, Google Cloud Functions, and IBM OpenWhisk. A special benefit of FaaS applications is that they consume no IaaS resources until an event occurs, reducing pay-per-use fees.
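The event-triggered model can be sketched with a toy, in-process stand-in for a FaaS platform. Everything here is hypothetical (the `TinyFaaS` class, the `file_uploaded` event name, the payload fields); it does not reflect the API of AWS Lambda, Azure Functions, or any other real service. It only shows that code sits idle, and unmetered, until an event arrives.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List

Handler = Callable[[Dict[str, Any]], Any]


class TinyFaaS:
    """Toy stand-in for a FaaS platform (hypothetical, in-process only)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)
        self.invocations = 0  # what a pay-per-use bill would be based on

    def on(self, event_type: str) -> Callable[[Handler], Handler]:
        """Decorator that registers a function for one event type."""
        def register(fn: Handler) -> Handler:
            self._handlers[event_type].append(fn)
            return fn
        return register

    def emit(self, event_type: str, payload: Dict[str, Any]) -> List[Any]:
        """Deliver an event; each triggered function counts as one invocation."""
        results = []
        for fn in self._handlers.get(event_type, []):
            self.invocations += 1
            results.append(fn(payload))
        return results


platform = TinyFaaS()


@platform.on("file_uploaded")
def describe_upload(event: Dict[str, Any]) -> str:
    # A narrowly functional block of code: it handles one kind of event.
    return f"received {event['name']} ({event['size']} bytes)"


print(platform.invocations)  # 0: nothing has run, so nothing is metered yet
print(platform.emit("file_uploaded", {"name": "report.pdf", "size": 2048}))
print(platform.invocations)  # 1
```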

Private cloud definition

A private cloud downsizes the technologies used to run IaaS public clouds into software that can be deployed and operated in a customer’s data center. As with a public cloud, internal customers can provision their own virtual resources to build, test, and run applications, with metering to charge back departments for resource consumption. For administrators, the private cloud amounts to the ultimate in data center automation, minimizing manual provisioning and management. VMware’s Software Defined Data Center stack is the most popular commercial private cloud software, while OpenStack is the open source leader.
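Metering for chargeback amounts to multiplying each department's recorded usage by an internal price list. The sketch below assumes a made-up rate card and made-up usage records; the names `RATES_CENTS` and `chargeback` are hypothetical and not taken from VMware, OpenStack, or any other product.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical internal price list, in cents per unit to keep arithmetic exact.
RATES_CENTS = {"vcpu_hour": 4, "gb_storage_month": 2}

UsageRecord = Tuple[str, str, int]  # (department, resource, quantity)


def chargeback(records: Iterable[UsageRecord], rates: Dict[str, int]) -> Dict[str, int]:
    """Total the metered usage per department, in cents."""
    totals: Dict[str, int] = defaultdict(int)
    for department, resource, quantity in records:
        totals[department] += quantity * rates[resource]
    return dict(totals)


usage = [
    ("marketing", "vcpu_hour", 100),
    ("marketing", "gb_storage_month", 50),
    ("engineering", "vcpu_hour", 1000),
]
print(chargeback(usage, RATES_CENTS))  # {'marketing': 500, 'engineering': 4000}
```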

Note, however, that the private cloud does not fully conform to the definition of cloud computing. Cloud computing is a service. A private cloud demands that an organization build and maintain its own underlying cloud infrastructure; only internal users of a private cloud experience it as a cloud computing service.

Hybrid cloud definition

A hybrid cloud is the integration of a private cloud with a public cloud. At its most developed, the hybrid cloud involves creating parallel environments in which applications can move easily between private and public clouds. In other instances, databases may stay in the customer data center and integrate with public cloud applications—or virtualized data center workloads may be replicated to the cloud during times of peak demand. The types of integrations between private and public cloud vary widely, but they must be extensive to earn a hybrid cloud designation.

Public APIs (application programming interfaces) definition

Just as SaaS delivers applications to users over the internet, public APIs offer developers application functionality that can be accessed programmatically. For example, in building web applications, developers often tap into the Google Maps API to provide driving directions; to integrate with social media, developers may call upon APIs maintained by Twitter, Facebook, or LinkedIn. Twilio has built a successful business dedicated to delivering telephony and messaging services via public APIs. Ultimately, any business can provision its own public APIs to enable customers to consume data or access application functionality.

iPaaS (integration platform as a service) definition

Data integration is a key issue for any sizeable company, but particularly for those that adopt SaaS at scale. iPaaS providers typically offer prebuilt connectors for sharing data among popular SaaS applications and on-premises enterprise applications, though providers may focus more or less on B-to-B and e-commerce integrations, cloud integrations, or traditional SOA-style integrations. iPaaS offerings in the cloud from such providers as Dell Boomi, Informatica, MuleSoft, and SnapLogic also let users implement data mapping, transformations, and workflows as part of the integration-building process.
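At its simplest, the data mapping and transformation step renames fields from one application's schema to another's and normalizes values along the way. The following sketch assumes two invented schemas (a CRM-style source and an ERP-style target); the names `FIELD_MAP`, `TRANSFORMS`, and `map_record` are hypothetical and do not correspond to any iPaaS vendor's connector format.

```python
from typing import Any, Callable, Dict

# Hypothetical mapping: source field name -> target field name.
FIELD_MAP = {"FirstName": "first_name", "LastName": "last_name", "Email": "email"}

# Hypothetical per-field transformations, keyed by target field name.
TRANSFORMS: Dict[str, Callable[[Any], Any]] = {"email": str.lower}


def map_record(source: Dict[str, Any],
               field_map: Dict[str, str],
               transforms: Dict[str, Callable[[Any], Any]]) -> Dict[str, Any]:
    """Rename mapped fields, apply any transformation, and drop unmapped fields."""
    target: Dict[str, Any] = {}
    for src_name, dst_name in field_map.items():
        if src_name not in source:
            continue  # tolerate records that lack an optional field
        value = source[src_name]
        transform = transforms.get(dst_name)
        target[dst_name] = transform(value) if transform else value
    return target


crm_record = {"FirstName": "Ada", "LastName": "Lovelace",
              "Email": "ADA@Example.COM", "LeadScore": 87}
print(map_record(crm_record, FIELD_MAP, TRANSFORMS))
```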

IDaaS (identity as a service) definition

The most difficult security issue related to cloud computing is the management of user identity and its associated rights and permissions across private data centers and public cloud sites. IDaaS providers maintain cloud-based user profiles that authenticate users and enable access to resources or applications based on security policies, user groups, and individual privileges. The ability to integrate with various directory services (Active Directory, LDAP, etc.) is essential. Okta is the clear leader in cloud-based IDaaS; CA, Centrify, IBM, Microsoft, Oracle, and Ping provide both on-premises and cloud solutions.
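An access decision based on policies, groups, and individual privileges can be sketched as a lookup with a deny-by-default rule. The policy model below is an assumption made for illustration: `UserProfile`, `POLICY`, and `can_access` are hypothetical names, and real IDaaS products use richer models and standard protocols that this sketch ignores.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class UserProfile:
    """Hypothetical cloud-based profile for an already authenticated user."""
    name: str
    groups: Set[str] = field(default_factory=set)
    privileges: Set[str] = field(default_factory=set)


# Hypothetical policy: a resource admits certain groups or individual privileges.
POLICY: Dict[str, Dict[str, Set[str]]] = {
    "payroll-app": {"groups": {"finance"}, "privileges": {"payroll:read"}},
}


def can_access(user: UserProfile, resource: str,
               policy: Dict[str, Dict[str, Set[str]]]) -> bool:
    """Allow access if the user matches a permitted group or privilege."""
    rule = policy.get(resource)
    if rule is None:
        return False  # deny by default when no policy covers the resource
    in_group = bool(user.groups & rule["groups"])
    has_privilege = bool(user.privileges & rule["privileges"])
    return in_group or has_privilege


alice = UserProfile("alice", groups={"finance"})
bob = UserProfile("bob", groups={"engineering"})
print(can_access(alice, "payroll-app", POLICY))  # True
print(can_access(bob, "payroll-app", POLICY))    # False
```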

Collaboration platforms

Collaboration solutions such as Slack, Microsoft Teams, and HipChat have become vital messaging platforms that enable groups to communicate and work together effectively. Basically, these solutions are relatively simple SaaS applications that support chat-style messaging along with file sharing and audio or video communication. Most offer APIs to facilitate integrations with other systems and enable third-party developers to create and share add-ins that augment functionality.

Vertical clouds

Key providers in such industries as financial services, health care, retail, life sciences, and manufacturing provide PaaS clouds to enable customers to build vertical applications that tap into industry-specific, API-accessible services. Vertical clouds can dramatically reduce the time to market for vertical applications and accelerate domain-specific B-to-B integrations. Most vertical clouds are built with the intent of nurturing partner ecosystems.

Other cloud computing considerations

By its most widely accepted definition, cloud computing means running your workloads on someone else’s servers, but this is not the same as outsourcing. Virtual cloud resources and even SaaS applications must be configured and maintained by the customer. Consider these factors when planning a cloud initiative.

Cloud computing security considerations

Objections to the public cloud generally begin with cloud security, although the major public clouds have proven themselves much less susceptible to attack than the average enterprise data center.

Of greater concern is the integration of security policy and identity management between customers and public cloud providers. In addition, government regulation may forbid customers from allowing sensitive data off premises. Other concerns include the risk of outages and the long-term operational costs of public cloud services.

Multicloud management considerations

The bar to qualify as a multicloud adopter is low: A customer just needs to use more than one public cloud service. However, depending on the number and variety of cloud services involved, managing multiple clouds can become quite complex from both a cost optimization and technology perspective.

In some cases, customers subscribe to multiple cloud services simply to avoid dependence on a single provider. A more sophisticated approach is to select public clouds based on the unique services they offer and, in some cases, integrate them. For example, developers might want to use Google’s TensorFlow machine learning service on Google Cloud Platform to build machine-learning-enabled applications, but prefer Jenkins hosted on the CloudBees platform for continuous integration.

To control costs and reduce management overhead, some customers opt for cloud management platforms (CMPs) and/or cloud service brokers (CSBs), which let you manage multiple clouds as if they were one cloud. The problem is that these solutions tend to limit customers to such common-denominator services as storage and compute, ignoring the panoply of services that make each cloud unique.
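The common-denominator problem is easy to see as a set intersection: a tool that treats several clouds as one can only expose what every cloud offers. The catalogs below are invented for illustration (the cloud names and service labels are hypothetical, not real product lists).

```python
from typing import Dict, Set

# Hypothetical service catalogs for three clouds.
CATALOGS: Dict[str, Set[str]] = {
    "cloud_a": {"compute", "storage", "managed_ml", "iot_hub"},
    "cloud_b": {"compute", "storage", "data_warehouse"},
    "cloud_c": {"compute", "storage", "managed_ml"},
}


def common_services(catalogs: Dict[str, Set[str]]) -> Set[str]:
    """Services available on every cloud, which is all a unified view can offer."""
    if not catalogs:
        return set()
    return set.intersection(*catalogs.values())


def hidden_services(catalogs: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Per-cloud services that a common-denominator view leaves out."""
    shared = common_services(catalogs)
    return {cloud: services - shared for cloud, services in catalogs.items()}


print(sorted(common_services(CATALOGS)))  # ['compute', 'storage']
print(hidden_services(CATALOGS)["cloud_a"])
```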

Edge computing considerations

You often see edge computing described as an alternative to cloud computing. But it is not. Edge computing is about moving computing to local devices in a highly distributed system, typically as a layer around a cloud computing core. There is typically a cloud involved to orchestrate all the devices and take in their data, then analyze it or otherwise act on it.
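The division of labor between edge and core can be sketched in a few lines: each device reduces its raw readings locally, and the cloud takes in only the summaries and acts on them. The function names, device IDs, and temperature threshold are all hypothetical, and network transport between the device and the cloud is left out.

```python
from statistics import mean
from typing import Dict, List, Union

Summary = Dict[str, Union[str, int, float]]


def summarize_at_edge(device_id: str, readings: List[float]) -> Summary:
    """Runs on the local device: reduce raw readings to a small summary."""
    return {
        "device": device_id,
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
    }


def cloud_ingest(summaries: List[Summary], alert_threshold: float) -> List[str]:
    """Runs in the cloud core: take in summaries and flag devices to act on."""
    return [str(s["device"]) for s in summaries if s["max"] > alert_threshold]


summaries = [
    summarize_at_edge("sensor-1", [20, 22, 24]),
    summarize_at_edge("sensor-2", [30, 45, 41]),
]
print(cloud_ingest(summaries, alert_threshold=40))  # ['sensor-2']
```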

Benefits of cloud computing

The cloud’s main appeal is to reduce the time to market of applications that need to scale dynamically. Increasingly, however, developers are drawn to the cloud by the abundance of advanced new services that can be incorporated into applications, from machine learning to internet of things (IoT) connectivity.

Although businesses sometimes migrate legacy applications to the cloud to reduce data center resource requirements, the real benefits accrue to new applications that take advantage of cloud services and “cloud native” attributes. The latter include microservices architecture, Linux containers to enhance application portability, and container management solutions such as Kubernetes that orchestrate container-based services. Cloud-native approaches and solutions can be part of either public or private clouds and help enable highly efficient devops-style workflows.

Cloud computing, public or private, has become the platform of choice for large applications, particularly customer-facing ones that need to change frequently or scale dynamically. More significantly, the major public clouds now lead the way in enterprise technology development, debuting new advances before they appear anywhere else. Workload by workload, enterprises are opting for the cloud, where an endless parade of exciting new technologies invites innovative use.

Source: Infoworld

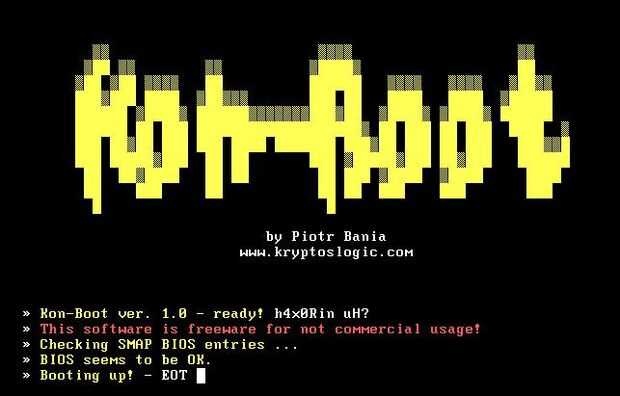

Technically, this website lets you log in to any Windows or Mac Operating system with full rights without prior knowledge of the machine’s password. Konboot is designed primarily for tech repairs, forensic teams, and security audit reasons. Piotr Bania is the mastermind behind this rare tool.

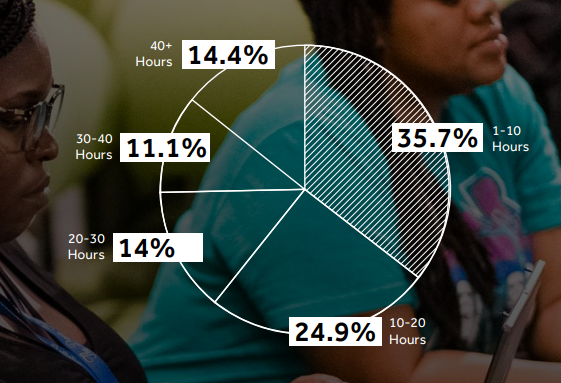

Users are asked to perform a specific task that simulates real-world usage, usually of a website. These tasks can be as easy as opening multiple pages across a selected website during a voice and screen capture, taking A/B tests and preference tests, and eventually completing a UI/UX review questionnaire afterward. These tests take less than 10 minutes to complete, no experience is required, and there is no cap on the number of tests a user can take per day.

Awwwards is the abode of a vast collection of mind-blowing websites across the internet where users not only get a chance to rate them based on design, creativity, and innovation on the internet but also gather unexplored ideas regarding their next projects. Users are also able to query and search directories based on their respective niche as well as hire and apply for website design positions site wide.
